How to Evaluate Healthcare AI Vendors: The Buyer's Checklist Operations Teams Need

Every buyer's guide to healthcare AI is written by a vendor selling healthcare AI, which makes it useless for the actual decision. This is the vendor-agnostic version for the operations director or VP who needs to make a decision in 90 days.



As of 2026 there are several hundred healthcare AI vendors, up from a few dozen three years ago. The market is also consolidating fast, with Healthcare Dive documenting accelerating M&A activity through the year. Proliferation and consolidation produce the same problem for buyers: how do you tell which vendors will still be running your workflow in 18 months, and which will be acquired, pivoted, or quietly wound down?

Buyer's guides published by vendors are a poor starting point. They answer the wrong question (why you should pick them) rather than the right one (how to make the decision).

This is the version written for operations directors, VPs of operations, COOs, and CFOs who have to sign the contract, own the outcome, and explain the decision to the board. Eight evaluation criteria. The questions to ask on each. The red flags that should kill a vendor conversation quickly. And the RFP scoring rubric to bring into vendor calls.

Criterion 1: EHR integration depth

The single most important question in any healthcare AI evaluation is whether the system integrates with your EHR in a way that does what you need.

Integration is not binary. There are four meaningful tiers.

Tier 1: Read-only integration. The AI can read patient records, scheduling data, and demographics from your EHR. It cannot write anything back. Useful for analytics. Inadequate for operational automation.

Tier 2: One-way write integration. The AI can create appointments, chart notes, or task assignments in the EHR, but through a batched or delayed process rather than real-time. Adequate for outbound batch workflows. Inadequate for real-time workflows.

Tier 3: Bidirectional real-time integration via API. The AI reads current state and writes changes in real time across the key EHR objects (patient, appointment, order, task, chart). This is the baseline for production operational automation.

Tier 4: Bidirectional integration plus structured data exchange. The AI not only reads and writes but does so using standardized data formats (FHIR, HL7, X12) that preserve structure and enable clean handoffs downstream. This is what you want for enterprise-scale or multi-EHR deployments. Our take on the depth question lives in AI voice scheduling and EHR integration.
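One way to keep vendor answers honest is to reduce them to the four capabilities the tiers are built from and let the tier fall out mechanically. The sketch below is illustrative only: the `IntegrationCapabilities` fields and the `integration_tier` function are hypothetical names, not part of any EHR or vendor API, and a tier of 0 is an added assumption for a vendor that cannot even read from the EHR.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntegrationCapabilities:
    """Hypothetical summary of what a vendor has demonstrated on your EHR."""
    can_read: bool             # reads patient, scheduling, demographic data
    can_write: bool            # writes appointments, notes, or tasks back
    real_time: bool            # writes land in real time, not batched or delayed
    structured_exchange: bool  # uses FHIR, HL7, or X12 for data exchange


def integration_tier(caps: IntegrationCapabilities) -> int:
    """Map demonstrated capabilities to tiers 1-4 (0 = no usable integration)."""
    if not caps.can_read:
        return 0
    if not caps.can_write:
        return 1  # read-only
    if not caps.real_time:
        return 2  # write-back exists, but batched or delayed
    if not caps.structured_exchange:
        return 3  # bidirectional and real time
    return 4      # bidirectional, real time, standardized formats


# Example: a vendor that writes back nightly lands in Tier 2.
nightly_batch = IntegrationCapabilities(True, True, False, False)
print(integration_tier(nightly_batch))
```

Fill in the fields from what you saw in a live deployment, not from what the sales deck says.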

Questions to ask: Which tier of integration do you offer on [my EHR]? Is it via API, scraping, or HL7 feed? What EHR objects do you read and write? Do you support bidirectional real-time? Can I see the integration running in a live customer deployment on my specific EHR, not a demo environment?

Red flag: “We integrate with every EHR” without specificity about tier and objects. “We have a partnership” without a production deployment. Evasiveness on whether integration is via API or scraping.

Criterion 2: Workflow scope

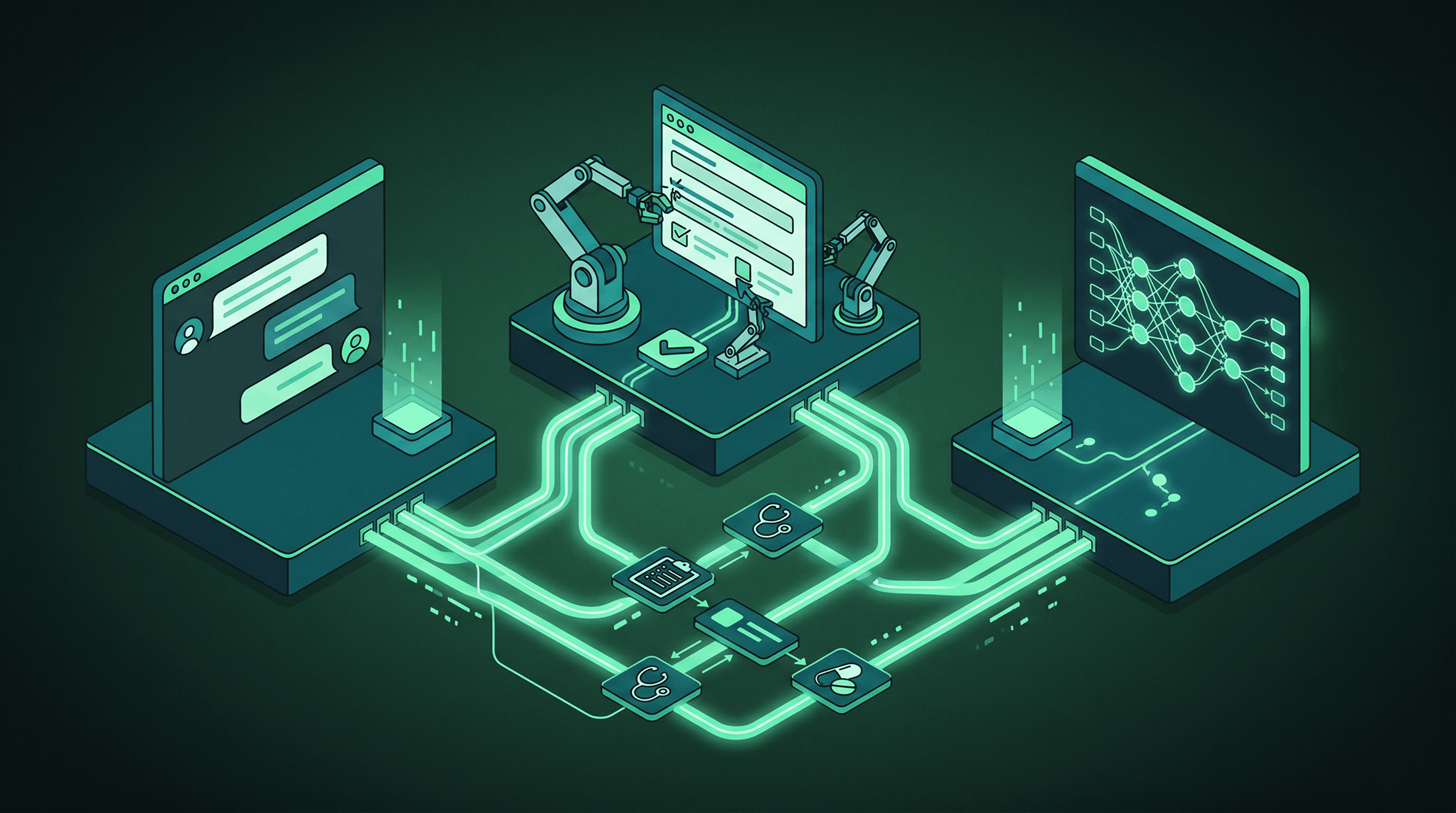

Does the vendor handle a single task or an end-to-end workflow?

“Single task” vendors are point solutions. They handle one step (eligibility verification, fax intake, scheduling, prior auth submission) and hand off to the next system. If your goal is to replace three coordinators with one platform, single-task vendors require you to stitch together several vendors, which recreates the coordination overhead.

“End-to-end workflow” vendors handle a full process from trigger to resolution. For referral coordination, that means fax/EHR intake through scheduled appointment through closed-loop documentation. For prior authorization, that means order through submission through approval through scheduling. For care gap closure, that means identification through outreach through visit completion through quality reporting. The category map across agents, chatbots, and RPA is in AI agents vs chatbots vs RPA in healthcare.

End-to-end doesn't have to mean monolithic. Some of the strongest vendors are deep in two or three workflows and thin elsewhere. That's fine. Just know which workflows they actually cover end to end.

Questions to ask: What workflows do you cover end to end? At what points do you hand off to human staff? Walk me through a specific referral (or PA, or care gap case) from trigger to close. What percentage of cases does your system complete end to end without human involvement? Show me the workflow running in a production deployment.

Red flag: Inability to describe the workflow without jargon. “End to end” claims that turn out to mean “end to end within our product” but require human handoff to complete the actual business process.

Criterion 3: Autonomy level

How much of the work does the system do on its own, and how is human oversight configured?

The operations goal is typically not full autonomy. It's calibrated autonomy that puts humans in the loop where judgment matters and lets the system run autonomously where it doesn't. The right configuration depends on the workflow.

For patient outreach, autonomous send with human-reviewed templates is usually fine. For inbound fax intake, autonomous extraction with human review of low-confidence cases is standard. For prior auth submission, human review of clinical summaries before submission is typical in the first 60 to 90 days, tapering as confidence builds. For scheduling with complex eligibility rules, autonomous scheduling within configured guardrails with human review of edge cases is the norm.

A vendor that offers only “always autonomous” or only “always human-in-the-loop” is the wrong fit either way. You want configurable autonomy per workflow, with confidence thresholds and escalation paths that your team can tune over time.
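To make "configurable autonomy per workflow" concrete, here is a minimal sketch of threshold-based routing. The workflow names and threshold values are made-up placeholders rather than recommendations, and `route` is a hypothetical function, not any vendor's interface. The one deliberate design choice is that an unconfigured workflow always goes to a human.

```python
# Hypothetical per-workflow confidence thresholds; tune these with your team.
THRESHOLDS = {
    "patient_outreach": 0.70,
    "fax_intake": 0.85,
    "prior_auth_submission": 0.95,
}

# Above any possible confidence score, so unknown workflows never run unattended.
DEFAULT_THRESHOLD = 1.01


def route(workflow: str, confidence: float) -> str:
    """Return 'autonomous' when confidence clears the workflow's threshold,
    otherwise 'human_review' (the exception queue)."""
    threshold = THRESHOLDS.get(workflow, DEFAULT_THRESHOLD)
    return "autonomous" if confidence >= threshold else "human_review"


print(route("fax_intake", 0.90))             # clears the 0.85 threshold
print(route("prior_auth_submission", 0.90))  # below 0.95, goes to a human
```

When a vendor shows you their configuration screen, you are looking for the equivalent of that threshold table, editable by your staff.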

Questions to ask: How is autonomy configured per workflow? Can I set confidence thresholds that route exceptions to human review? What does the exception queue look like for my staff? How does the system explain its reasoning when it escalates? How is tuning done over time?

Red flag: “The AI handles everything” with no specifics on oversight. Inability to show the exception queue or describe the escalation experience.

Criterion 4: Time to value

How long does it take to get from contract signature to measurable results?

Standard benchmarks in 2026 for purpose-built healthcare AI platforms:

- First workflow live: 4 to 8 weeks

- Full deployment of primary workflows: 8 to 16 weeks

- Measurable operational metrics (volume processed, exception rate): 30 to 60 days after go-live

- Measurable revenue metrics (completion rate, no-show rate, revenue per referral): 60 to 120 days after go-live

Deployments that stretch past 16 weeks are usually not a vendor capability problem but an integration or stakeholder alignment problem on the buyer's side. That said, vendors with stronger implementation teams hit the shorter end of the ranges consistently.

Questions to ask: What's your standard go-live timeline on my EHR? Who owns what during implementation (vendor team, our team, third parties)? Show me the implementation plan in detail. What happens if we blow past the timeline?

Red flag: “Go-live in one week” for anything other than a very narrow workflow. Implementation timelines of six months or more for operational AI (that is the pace of an EHR implementation, not an AI implementation).

Criterion 5: Compliance posture

HIPAA, SOC 2, and security reviews are not the interesting part of the evaluation, but they are the gate.

Minimum bars for 2026:

- Signed BAA template available for review before contract

- SOC 2 Type II report (not Type I, and not “SOC 2 in progress”) available for review under NDA

- Encryption at rest and in transit for all PHI

- Role-based access controls and audit logs

- Documented breach notification procedures

- Subprocessor list with BAAs in place for each subprocessor that touches PHI

- Documented data retention and deletion policies

If the vendor can't produce these within the first two meetings, keep looking.
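Because compliance is a gate rather than a score, it can be tracked as a simple pass/fail checklist. The sketch below assumes you record which artifacts a vendor has actually produced; the artifact keys are hypothetical labels for the seven minimum bars above.

```python
# Hypothetical labels for the seven minimum bars listed above.
REQUIRED_ARTIFACTS = [
    "baa_template",
    "soc2_type_ii_report",
    "encryption_at_rest_and_in_transit",
    "rbac_and_audit_logs",
    "breach_notification_procedure",
    "subprocessor_list_with_baas",
    "data_retention_and_deletion_policy",
]


def missing_artifacts(provided: set) -> list:
    """List the required artifacts the vendor has not yet produced."""
    return [item for item in REQUIRED_ARTIFACTS if item not in provided]


def passes_compliance_gate(provided: set) -> bool:
    """The gate passes only when nothing is missing."""
    return not missing_artifacts(provided)


# Example: a vendor still "in progress" on SOC 2 fails the gate.
received = set(REQUIRED_ARTIFACTS) - {"soc2_type_ii_report"}
print(passes_compliance_gate(received))
print(missing_artifacts(received))
```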

Questions to ask: Share your BAA template and SOC 2 Type II report. What's your subprocessor list? How do you handle PHI in voice calls (recording, transcription, storage)? What's your data retention policy? Your breach notification procedure? Your disaster recovery posture?

Red flag: Vague answers on BAA terms. “SOC 2 in progress” with no timeline. Subprocessors without BAAs. Opaque data retention.

Criterion 6: Measurable ROI

Vendors who cannot commit to measurable ROI are vendors who don't believe in their own product.

The right ROI conversation has four elements: the metric that will improve (referral completion rate, PA turnaround time, no-show rate, AWV completion), the expected magnitude of improvement, the baseline measurement protocol, and the timeline by which you should see it.
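Those four elements fit in a small record that both sides can sign off on. The sketch below is an illustration under stated assumptions: `RoiCommitment` is a hypothetical structure, and every number in the example is invented for demonstration, not a benchmark.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoiCommitment:
    """Hypothetical record of one contractual ROI commitment."""
    metric: str                    # the metric that will improve
    baseline: float                # measured before go-live
    target: float                  # expected value by the deadline
    deadline_days: int             # days after go-live
    higher_is_better: bool = True  # False for metrics like no-show rate

    def is_met(self, observed: float) -> bool:
        """Compare the observed value with the target in the right direction."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target

    def relative_change(self, observed: float) -> float:
        """Change versus baseline as a fraction of baseline."""
        return (observed - self.baseline) / self.baseline


# Invented numbers, for illustration only.
completion = RoiCommitment("referral_completion_rate", 0.55, 0.70, 120)
no_show = RoiCommitment("no_show_rate", 0.18, 0.12, 120, higher_is_better=False)

print(completion.is_met(0.72))  # target reached
print(no_show.is_met(0.14))     # improved, but short of target
```

If a vendor cannot fill in all four fields for at least one metric, the ROI conversation is not finished.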

Strong vendors will commit to ROI language in the MSA or a separate performance attachment. Average vendors will point to case studies. Weak vendors will tell you ROI is “hard to predict.” For a worked example see the ROI of AI referral automation.

Questions to ask: What metrics will improve? By how much? How will we measure the baseline? What's the expected timeline? Are you willing to put performance language in the contract? What happens if the system doesn't hit expected performance?

Red flag: Refusal to commit to specific metrics. Case studies without named customers or named numbers. “Every practice is different” as a deflection.


Criterion 7: Escalation design

When the AI fails, what happens?

Every AI system fails some percentage of the time. The question is what the failure looks like from the patient's perspective and the coordinator's perspective. The right design has three properties.

First, graceful escalation. When the AI encounters something it can't resolve, the patient (or the workflow) is handed to a human with full context, not dropped or looped back to the AI.

Second, full context preservation. The coordinator picking up the escalation sees what the AI saw, what it tried, and why it stopped. Not a cold handoff.

Third, feedback loops that improve the system. When a human resolves an escalated case, the resolution is captured and becomes training data for future cases. The AI doesn't keep making the same mistake. For where this breaks in voice see voice AI in healthcare: what works and what breaks.
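The three properties can be read as requirements on the handoff record itself. The sketch below is a hypothetical data structure, not a description of how any vendor stores escalations: it carries what the AI saw, what it tried, and why it stopped, and it captures the human resolution in a log that could later feed tuning.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Escalation:
    """Hypothetical handoff record passed from the AI to a coordinator."""
    case_id: str
    observed: dict      # what the AI saw
    attempts: list      # what it tried
    stop_reason: str    # why it stopped
    resolution: Optional[str] = None

    def has_full_context(self) -> bool:
        """A cold handoff is one where any of the three context fields is empty."""
        return bool(self.observed) and bool(self.attempts) and bool(self.stop_reason)


def resolve(escalation: Escalation, resolution: str, feedback_log: list) -> None:
    """Record the human resolution and keep the case for later review."""
    escalation.resolution = resolution
    feedback_log.append(escalation)


feedback_log = []
case = Escalation(
    case_id="example-001",
    observed={"document": "referral fax", "pages": 3},
    attempts=["extract insurance ID"],
    stop_reason="insurance ID unreadable",
)
print(case.has_full_context())
resolve(case, "coordinator confirmed ID by phone", feedback_log)
print(len(feedback_log))
```

In a demo, ask to see the real equivalent of each field on the coordinator's screen.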

Questions to ask: Show me an escalation in action. What does the patient experience? What does the coordinator see? How is the resolution captured? How does the system get better over time?

Red flag: “The AI never fails.” (No system is 100 percent.) Handoffs that lose context. No feedback mechanism.

Criterion 8: Vendor stability

Healthcare is a long-term business. Your AI vendor needs to be around in three to five years.

Signals of stability: clear funding (public or well-capitalized private), diversified customer base (not two anchor customers), customer retention north of 90 percent, public leadership team with healthcare experience, product roadmap that's consistent across multiple quarters.

Signals of instability: heavy concentration in one customer segment that's consolidating, high leadership turnover, roadmap changes that follow funding events, unwillingness to share customer count or retention data, no named customers in your specific segment.

Questions to ask: How many customers do you have? What's your retention rate? Can I speak to a reference customer in my segment? What's your funding position? What's the leadership team's healthcare experience? Where's the roadmap going?

Red flag: Unwillingness to share customer count. No references in your segment. Leadership team with no prior healthcare experience.

What does good look like against all eight criteria?

A strong healthcare AI vendor in 2026 will score as follows against the eight criteria.

| Criterion | Strong vendor | Weak vendor |
|---|---|---|
| EHR integration depth | Tier 3 or 4 on your specific EHR, live in production | Tier 1 or 2, or “coming soon” |
| Workflow scope | End-to-end on the workflows that matter to you | Point solution requiring stitching |
| Autonomy level | Configurable per workflow, clear escalation queues | Fixed at one end of the spectrum |
| Time to value | 4–8 weeks first workflow, 60 days measurable ops metrics | 12+ weeks or refusal to commit |
| Compliance posture | BAA, SOC 2 Type II, encryption, subprocessor BAAs, available pre-signature | Gaps on any of the above |
| Measurable ROI | Specific metrics, committed timeline, willing to contract on it | Case studies only, no contractual commitment |
| Escalation design | Graceful handoffs with full context, feedback loops improving the system | Cold handoffs or silent failures |
| Vendor stability | Growth funding, 90%+ retention, named references in your segment | Opaque on customers and retention |
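If you want a number to compare vendors side by side, a weighted average over the eight criteria is a reasonable starting point. The sketch below makes several assumptions that are not part of the framework itself: a 0 to 5 scale per criterion, equal weights unless you supply your own, and a floor of 3 on compliance below which the vendor scores zero (reflecting that compliance is a gate, not a tradeoff).

```python
CRITERIA = [
    "ehr_integration_depth",
    "workflow_scope",
    "autonomy_level",
    "time_to_value",
    "compliance_posture",
    "measurable_roi",
    "escalation_design",
    "vendor_stability",
]


def score_vendor(scores, weights=None, compliance_floor=3):
    """Weighted average of 0-5 scores; returns 0.0 if compliance fails the gate.

    `scores` and `weights` are dicts keyed by the names in CRITERIA.
    """
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    if scores["compliance_posture"] < compliance_floor:
        return 0.0
    if weights is None:
        weights = {c: 1.0 for c in CRITERIA}  # equal weighting is an assumption
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(scores[c] * weights[c] for c in CRITERIA) / total_weight


# Example: solid everywhere, but compliance gaps zero out the result.
vendor = {c: 4 for c in CRITERIA}
print(score_vendor(vendor))
vendor["compliance_posture"] = 2
print(score_vendor(vendor))
```

Agree on the weights with your evaluation committee before the first demo, so the scoring cannot be bent to fit a favorite.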

Linear Health is one example of a vendor that has been positioned around these criteria specifically, with four-week go-live on supported EHRs, bidirectional Athena-native integration, configurable autonomy by workflow, and named reference customers across specialty practices, FQHCs, and behavioral health. The checklist is the point, not the example. Any vendor should be evaluated against these criteria, and the ones that pass are the ones worth pursuing.

What RFP questions should you bring to vendor calls?

A practical subset of questions for your next vendor call, organized by criterion.

Integration: What tier of integration do you offer on [my EHR] and which objects are bidirectional? Can I see it running in a live customer deployment on my EHR?

Workflow scope: Walk me through [specific workflow: referral intake, PA submission, care gap closure] from trigger to close, describing every system touched. What percentage of cases do you complete end to end?

Autonomy: Show me the exception queue for my staff. How are confidence thresholds configured? Can we tune them?

Time to value: What's the go-live timeline for my EHR and my first workflow? Who owns what? What happens if the timeline slips?

Compliance: Send me your BAA template and SOC 2 Type II report under NDA. Who are your subprocessors and do they have BAAs?

ROI: Which metric will improve? By how much? How will we measure baseline? Over what timeline? Will you put performance language in the contract?

Escalation: Show me an escalation live. What does the patient experience? What does the coordinator see?

Stability: How many customers? Retention rate? Three references in my segment. What's your funding position and runway?

What are the red flags that should kill a vendor conversation?

Fifteen minutes into a vendor conversation, you can usually tell. The patterns:

- Can't share customer references in your segment. “We have lots of customers but they're all under NDA” is a tell for either no customers or no relevant customers.

- Promises full automation day one. Healthcare AI has a tuning curve. Any vendor claiming zero ramp is either inexperienced or lying.

- Avoids integration specifics. “We integrate with everything” without specificity on tier, objects, and live deployments.

- Refuses to commit to ROI. A vendor that won't commit to a measurable outcome doesn't believe in their own product.

- SOC 2 “in progress” for more than 12 months. Usually a signal of inadequate security investment.

- No named leadership with healthcare experience. General-purpose enterprise software experience is not a substitute for healthcare operational fluency.

- Demos that only show happy paths. Every production deployment has edge cases. Vendors who can't show edge cases haven't stress-tested their product.

- Promises that map too closely to everything you said on the call. If the product does exactly what you described, the vendor is probably describing a roadmap.

“What I wanted to know was not whether the demo looked impressive. I wanted to know whether the system would still be running my workflow in two years. That's a completely different evaluation, and it's the one that's usually skipped.”

Best fit and less ideal fit for this framework

This evaluation framework fits best for: operations directors and COOs evaluating healthcare AI vendors for coordination, scheduling, prior auth, care gap, or voice AI workflows. CFOs doing vendor selection alongside ops leaders. VPs of revenue cycle evaluating automation for claims, PA, or coordination.

Less ideal for: evaluating clinical AI (diagnostic, imaging, ambient scribing) where the evaluation criteria skew more toward clinical accuracy and FDA posture. Evaluating pure analytics platforms (population health, risk stratification) where the workflow execution criteria are less relevant.

Frequently asked questions

How long should a healthcare AI vendor evaluation take?

For a platform that will run a primary operational workflow, 60 to 90 days is a reasonable evaluation window. Longer than 120 days typically signals indecision on your side. Shorter than 45 days typically signals inadequate diligence. The steps within the window: vendor shortlist, initial demos, deeper workflow demos on your data, reference calls, security review, integration confirmation, contract negotiation.

Should we pilot before full contract?

Pilot programs are useful for validating integration and real-world performance before full commitment. Structure the pilot with explicit success criteria tied to a measurable metric, a defined scope, and a contract off-ramp if the pilot doesn't hit criteria. Unbounded pilots tend to drift into zombie projects.

Who should be in the evaluation committee?

Operations lead (decision owner), IT or CIO (integration validation), clinical lead (workflow impact), revenue cycle or finance lead (ROI validation), compliance officer (HIPAA and security), and ideally a champion from the end-user team that will operate the system daily. Committees larger than six typically slow decisions without improving them.

How do we avoid being dazzled by demos?

Two tactics. First, always ask for the demo to run on your data, not the vendor's sample data. Vendors with production-grade systems will accommodate this with a few days of setup. Vendors with brittle systems won't. Second, ask to see the system handle failure modes: an unreadable fax, a patient who hangs up mid-call, an insurance card that doesn't match the record, a scheduling conflict. How a system handles exceptions is more diagnostic than how it handles happy paths.

Should we build internally instead of buying?

For specific narrow automations, building internally can make sense if you have engineering capacity and the workflow is stable. For broad operational automation (end-to-end referral coordination, multi-payer prior auth, voice AI integrated with your EHR), building internally typically costs more than the buy option and takes 3 to 5 times longer, and the maintenance burden after launch is substantial. Most health systems that started on build paths in 2022-2023 have since moved to buy or hybrid approaches.

Pulling it together

Healthcare AI vendor evaluation in 2026 is no longer a question of whether AI can do the work. It's a question of which vendor will deliver on your EHR, with your workflows, on a timeline your team can execute. The eight criteria in this framework are the ones that separate vendors who will deliver from vendors who will disappoint.

If you want to see how a specific vendor stacks up on all eight criteria on your actual workflow, the right move is a structured demo on your data. Let us know if that would be useful.


Sami scaled Simple Online Healthcare to $150M and built a multi-specialty telehealth clinic across 20 specialties and all 50 states.