AI Agents vs Chatbots vs RPA in Healthcare: What's the Difference, and Which Should You Use?

Three technologies get grouped together as "healthcare AI" in vendor pitches. They do fundamentally different things. Here's the operational breakdown and the decision framework for which one your practice actually needs.

The terminology around healthcare AI has gotten sloppy. A vendor will pitch you an “AI agent” that on inspection turns out to be a chatbot with better marketing. Another will pitch “RPA” as if it's the same category as agentic AI. A third conflates all three.

For an operations leader evaluating AI technology in 2026, the confusion isn't academic. It determines whether you buy a tool that will close referral loops and reduce coordinator load, or one that sits on top of your existing workflow and adds a new interface your team has to manage.

This is the version written by operators for operators. Three technologies, three use cases, and a decision matrix for which one fits which problem.

What is RPA in healthcare?

Robotic Process Automation (RPA) is a rule-following macro. It mimics the clicks and keystrokes a human would do, following a fixed script. If your coordinator logs into the Anthem portal, types in a patient's ID, clicks “check status,” reads the result, and pastes it into your EHR, RPA can do that same sequence thousands of times a day without fatigue.

What RPA does well: repetitive, structured, rules-based tasks with predictable inputs. Portal logins. Data entry between systems. Basic claim status checks. Eligibility verification from standard payer formats. It runs 24/7, doesn't need breaks, and is cheap per transaction once built.

Where RPA breaks: anywhere the script meets the unexpected. A portal that adds a new pop-up, a payer that changes its interface, a referral that arrives in a slightly different format, a patient response that doesn't match the script. RPA is brittle in exactly the places healthcare is messy.

In practice, RPA has been useful in healthcare for about a decade but has been losing ground to more adaptive technologies. A 2024 BCG analysis of agentic AI in healthcare noted that RPA implementations typically cover 10 to 20 percent of a target workflow and require constant maintenance as source systems change. For structured, narrow tasks, it still works. For end-to-end workflow automation, it doesn't scale.
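
To make the brittleness concrete, here is a minimal Python sketch of how a script-following bot behaves. It assumes a hypothetical claim-status script modeled as a list of screen elements the bot expects to find. Real RPA tools are far more elaborate, but the failure mode is the one described above: when the portal stops matching the recording, the bot stops.

```python
class ScriptBroken(Exception):
    """Raised when the portal no longer matches the recorded script."""

# Hypothetical recorded script: (UI element the bot expects, action to perform).
CLAIM_STATUS_SCRIPT = [
    ("login_button", "click"),
    ("member_id_field", "type:{member_id}"),
    ("check_status_button", "click"),
    ("status_label", "read"),
]

def run_script(screen_elements: set, member_id: str) -> list:
    """Replay the fixed script against a portal whose visible elements are given."""
    actions = []
    for element, action in CLAIM_STATUS_SCRIPT:
        if element not in screen_elements:
            # No reasoning and no fallback: any unexpected change halts the bot.
            raise ScriptBroken(f"expected '{element}' but it was not found")
        actions.append(f"{action.format(member_id=member_id)} -> {element}")
    return actions
```

If the payer adds a pop-up that hides `check_status_button`, the run raises `ScriptBroken` and someone has to update the script before the bot works again.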

What is a chatbot in healthcare?

A chatbot is a conversational interface. It takes in natural language input and produces natural language output, usually by matching user intent to a pre-built answer or routing the user to a staff member. The better chatbots in 2026 use large language models to handle more conversational flexibility, but the core function is the same: take a question, give a response.

What chatbots do well: FAQ handling. Basic triage (route the patient to scheduling, billing, or clinical). Appointment reminders in a conversational format. Intake forms that adapt based on patient answers. Patient education delivery.

Where chatbots break: they answer and route. They don't execute. A chatbot can tell a patient “I can help you schedule an appointment” and collect the information, but unless it's wired into the scheduling system it hands off the request to a human. The patient thinks something happened. The coordinator queue thinks nothing has happened. Both are right.

The bigger issue is that chatbots are a conversational layer, not a workflow layer. They are good at the moment of interaction and bad at everything that needs to happen after the moment of interaction. For practices evaluating AI, a chatbot-only solution creates the illusion of automation without the execution. For a deeper map of where conversational AI works in healthcare, see our guide to conversational AI in healthcare.

What is an AI agent in healthcare?

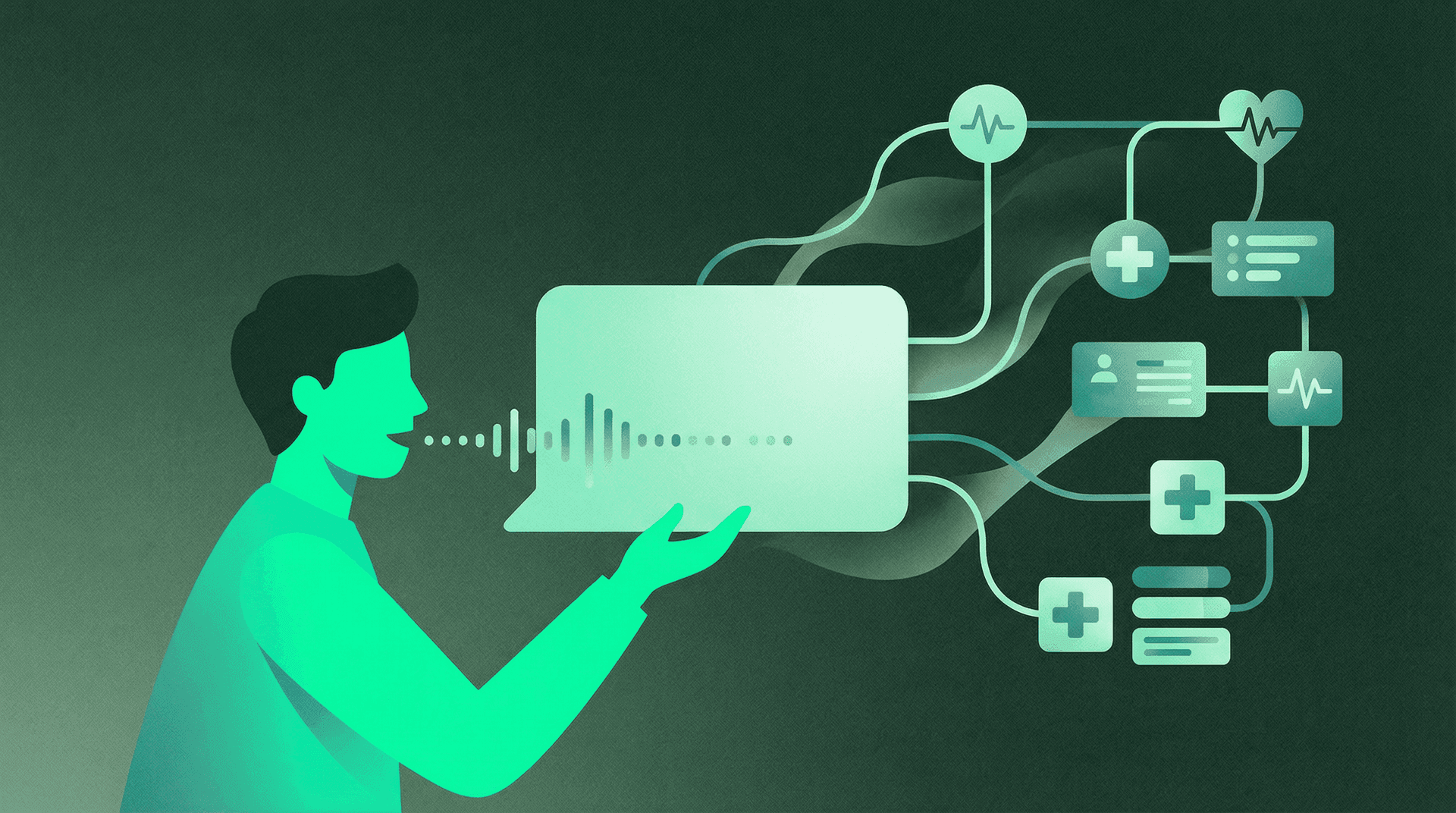

An AI agent is software that executes multi-step workflows autonomously. It takes in an input (a fax, a patient call, an EHR trigger, a payer file), decides what steps are needed, executes those steps across multiple systems, handles exceptions, escalates when needed, and closes the loop.

Here's what that looks like concretely for an inbound specialty referral. An AI agent receives the referral fax. It extracts patient demographics, insurance information, and the clinical reason for referral. It verifies eligibility with the payer in real time. It creates the patient chart in the EHR. It contacts the patient by SMS with a self-scheduling link in the patient's preferred language. If the patient doesn't respond, it follows up by voice. When the patient books, it writes the appointment back to the EHR calendar. It tracks the referral through completion and sends a closed-loop notification to the referring provider. The full referral agent is described on our inbound referral coordination solution page.

That is not a chatbot. That is not RPA. That is workflow execution, spanning six or seven systems, handling exceptions, making decisions about routing, and completing without human involvement in the routine cases.

The operational test for whether something is an AI agent is this: if the human has to do the next step, it's not an agent. If the system handles the workflow end to end and only escalates exceptions, it is.
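
The operational test can be expressed in a few lines. The Python sketch below is a simplified, hypothetical version of the referral flow. Each step is a stand-in function with no real fax, payer, EHR, or SMS integration, and any step can raise `NeedsHuman` to escalate. A run either ends `closed` with no human touch, or `escalated` with a record of what was completed and why it stopped.

```python
from dataclasses import dataclass, field
from typing import Optional

class NeedsHuman(Exception):
    """Raised by a step when it hits a case it should not decide on its own."""

@dataclass
class Outcome:
    status: str                                    # "closed" or "escalated"
    completed: list = field(default_factory=list)  # steps finished before stopping
    failed_step: Optional[str] = None
    reason: Optional[str] = None

def run_workflow(record: dict, steps: list) -> Outcome:
    """Run named steps in order; stop and escalate on the first NeedsHuman."""
    completed = []
    for name, step in steps:
        try:
            step(record)  # each step reads and updates the shared record
        except NeedsHuman as exc:
            return Outcome("escalated", completed, name, str(exc))
        completed.append(name)
    return Outcome("closed", completed)

# Hypothetical stand-ins for real integrations (fax extraction, payer API, EHR, SMS).
def extract_fields(r):
    r["patient"], r["payer"] = r["fax_text"].split("|")

def verify_eligibility(r):
    if r["payer"] not in r["active_payers"]:
        raise NeedsHuman(f"no active coverage found for payer {r['payer']}")

def create_chart(r):
    r["chart_id"] = f"chart-{r['patient']}"

def send_scheduling_link(r):
    r["outreach"] = "sms"

def notify_referrer(r):
    r["loop_closed"] = True

REFERRAL_STEPS = [
    ("extract", extract_fields),
    ("eligibility", verify_eligibility),
    ("chart", create_chart),
    ("outreach", send_scheduling_link),
    ("close_loop", notify_referrer),
]
```

With a recognized payer the run ends `closed`. With an unrecognized payer it stops at `eligibility` and reports `completed == ["extract"]`, which is exactly the handoff a human picks up.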

How do these three technologies compare side by side?

| | RPA | Chatbot | AI Agent |
|---|---|---|---|
| What it does | Executes fixed scripts of clicks and data entry | Answers questions, routes conversations | Executes multi-step workflows autonomously across systems |
| How it works | Rule-based UI automation | Intent matching, sometimes LLM-powered conversation | LLM-powered reasoning + tool use + orchestration |
| Healthcare example | Automated eligibility check against payer portal | Answers “are you open Saturdays?” and routes scheduling requests | Reads referral fax, verifies insurance, contacts patient, books appointment, closes loop |
| Best use case | Narrow, repetitive tasks with structured inputs | Patient-facing FAQ, basic intake, triage routing | End-to-end workflow coverage (referral, PA, outreach, scheduling) |
| Limitations | Breaks on UI changes, no exception handling, brittle at scale | Answers but does not execute, requires human to take next action | Requires deeper integration, higher upfront config, higher value |
| Typical ROI timeline | 3 to 6 months for narrow tasks, maintenance burden over time | 2 to 4 months for call deflection and basic triage | 4 to 12 months for coordination workflows, durable ROI |
| What it replaces | Specific repetitive tasks inside a role | Tier-1 support or receptionist routing | Routine 80% of coordinator, scheduler, PA specialist workload |
| Integration depth | Surface-level (UI automation) | Light (messaging channels, sometimes EHR via chat) | Deep (EHR bidirectional, payer APIs, telephony, SMS, fax) |

The practical implication is that these three technologies solve different problems. An organization trying to automate its end-to-end coordination workflow by buying a chatbot will be disappointed. An organization looking to automate a single narrow task like eligibility checks is probably better served by RPA than by an AI agent platform.

Ready to see an AI agent run a real referral workflow?

We'll show you the fax-to-scheduled-appointment path on your specific workflow, on your actual EHR. Book a 15-minute demo.

What's the decision framework for choosing the right technology?

For an operations leader, the decision matrix looks like this.

If the problem is narrow, repetitive, and structured (eligibility verification on a stable portal, claim status checks on a standard format), RPA is cheaper and faster to deploy. The caveat is that you will carry a maintenance burden over time.

If the problem is conversational and low-execution (patient FAQ, basic triage, appointment reminder conversations, intake forms), a chatbot is the right tool. Don't expect it to handle workflows.

If the problem is multi-step workflow coverage across systems (referral intake through appointment completion, prior auth submission through approval, care gap identification through closure), you need an AI agent platform. Neither RPA nor chatbots will cover this. The short-term cost is higher and the integration is deeper, but the ROI is durable and scales. For the dollar-level math, see our breakdown of the ROI of AI referral automation.

If the problem is “all of the above,” which is the honest answer for most specialty practices and primary care groups in 2026, you want an AI agent platform that can incorporate RPA-style execution for structured tasks and chatbot-style conversation for patient-facing interactions, with the agent orchestration layer running end to end. Platforms that force you to stitch together three vendors for this create the same coordination overhead you were trying to eliminate.
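
The framework above reduces to a few ordered rules. This Python sketch encodes them as written. The three boolean fields are a simplification we're assuming for illustration, and the rule order (broadest scope first) is our own choice for resolving overlaps the framework doesn't spell out.

```python
from dataclasses import dataclass

@dataclass
class Problem:
    structured_and_repetitive: bool  # predictable inputs on a stable system (e.g. eligibility checks)
    conversational: bool             # patient-facing Q&A, triage, reminders, intake
    multi_step_across_systems: bool  # e.g. referral intake through appointment completion

def recommend(p: Problem) -> str:
    """Map a problem profile to a technology, broadest scope first."""
    if p.multi_step_across_systems:
        if p.structured_and_repetitive and p.conversational:
            # "All of the above": one agent platform orchestrating both kinds of task.
            return "AI agent platform (with RPA-style and chatbot-style components)"
        return "AI agent platform"
    if p.conversational:
        return "chatbot"
    if p.structured_and_repetitive:
        return "RPA (budget for script maintenance)"
    return "undetermined: define the problem before evaluating vendors"
```

Starting from the problem profile rather than the vendor's feature list is the point: the function never asks what a product can demo.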

Where do EHRs fit in this picture?

The common objection from practice leaders is “we already have an EHR with automation features, why do we need AI agents?” It's a fair question, and the honest answer matters.

Modern EHRs (athenahealth, Epic, Cerner, eClinicalWorks) have added workflow automation over the past several years. They can auto-route messages, trigger reminders, flag overdue tasks, and run basic rules. Some have integrated LLM features for documentation summaries and patient communication drafts.

What EHRs don't do, because they're not designed to, is autonomous multi-step workflow execution across systems your EHR doesn't own. Your EHR doesn't read inbound faxes from a third-party fax service, extract structured data from unstructured documents, execute prior auth submission to 40 different payer portals, place outbound phone calls with conversational AI, or close the loop with a referring provider on a different EHR. Those are agent jobs. Our guide to AI voice scheduling and EHR integration covers the integration-depth question in detail.

The right mental model is that the EHR is the system of record, and the AI agent is the system of work. They coexist. A well-designed agent platform writes back to the EHR bidirectionally so that every action the agent takes is recorded in the patient chart. The EHR is where the data lives. The agent is where the work gets done.

What are the governance requirements for each?

Healthcare AI governance is not uniform across the three technologies. The depth of oversight should match the depth of autonomy.

RPA governance is mostly about change management and error monitoring. When a portal changes, your scripts break. When scripts break, things downstream break. Governance means monitoring, alerting, and version control over scripts.

Chatbot governance is mostly about content and escalation. What can the chatbot say? When does it hand off? Is the handoff traceable? HIPAA compliance is a BAA and encryption question. Most chatbot deployments are lower-risk because the chatbot isn't doing anything the patient couldn't have done themselves with a static FAQ page.

AI agent governance is a meaningfully different conversation. Because the agent executes actions on behalf of the organization, you need audit trails for every decision, confidence thresholds that trigger human review, escalation paths that preserve context, data residency controls, SOC 2 Type II verification, BAAs, and clear guardrails for what the agent can and cannot decide. In 2026, governance is the gate between pilot and production for most healthcare AI agent deployments.

What does good implementation look like?

Across deployments in specialty and primary care settings, the common pattern for a successful AI agent rollout follows a few principles.

Integration first, interface second. Before configuring any agent workflow, the integration to the EHR, payer systems, and telephony should be solid. Agents that run on partial integration produce partial outputs that create new coordination work.

One workflow at a time. The practices that try to launch six agent workflows in the same quarter run into the same organizational resistance that killed their earlier automation attempts. Start with the highest-volume, clearest-ROI workflow (usually patient outreach or referral intake), prove it, then expand.

Human-in-the-loop by default, autonomous by configuration. Every agent workflow should start with human review of every exception, and the escalation threshold should be tuned down as confidence in the agent grows. Going autonomous before the system has been tuned is how you end up with a bad patient experience that makes its way to leadership at the worst possible moment.
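
One way to implement "human-in-the-loop by default" is a confidence gate that starts strict and is relaxed deliberately. The sketch below assumes the agent attaches a confidence score between 0 and 1 to each decision; the starting threshold, step size, and floor are hypothetical values a team would set for itself.

```python
from dataclasses import dataclass

@dataclass
class ReviewGate:
    """Hypothetical gate: decisions at or below the threshold go to a human."""
    threshold: float = 1.0  # start strict: no score exceeds 1.0, so everything is reviewed
    floor: float = 0.85     # assumed lowest threshold the team is willing to allow

    def route(self, confidence: float) -> str:
        return "auto" if confidence > self.threshold else "human_review"

    def relax(self, step: float = 0.05) -> None:
        """Lower the threshold after a review period shows the agent's calls hold up."""
        self.threshold = max(self.floor, round(self.threshold - step, 4))
```

Starting at 1.0 with a strict comparison sends every case to review. After two `relax()` calls the threshold is 0.9, so a 0.95-confidence decision proceeds automatically while a 0.85 one still goes to a person. The floor keeps the threshold from drifting lower than the team has agreed to.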

Metrics that matter. Track volume processed per FTE, exception rate, time from trigger to resolution, patient satisfaction, and the revenue metric most tied to the workflow (referral completion rate for referral agents, no-show rate for scheduling agents, PA approval time for PA agents).
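
Most of these metrics fall out of a simple per-case log. The Python sketch below assumes each case records when it was triggered, when it was resolved, whether it escalated, and whether it reached the workflow's target outcome (for a referral agent, a completed referral). The field names are hypothetical, and patient satisfaction is left out because it usually comes from surveys rather than the workflow log.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median

@dataclass
class Case:
    triggered_at: datetime  # when the workflow started (fax received, EHR trigger, etc.)
    resolved_at: datetime   # when the case was closed out
    escalated: bool         # True if a human had to handle an exception
    reached_goal: bool      # workflow-specific outcome, e.g. referral completed

def workflow_metrics(cases: list, fte: float) -> dict:
    """Summarize a period's cases; assumes at least one case and fte > 0."""
    n = len(cases)
    hours = [(c.resolved_at - c.triggered_at) / timedelta(hours=1) for c in cases]
    return {
        "volume_per_fte": n / fte,
        "exception_rate": sum(c.escalated for c in cases) / n,
        "median_hours_to_resolution": median(hours),
        "goal_rate": sum(c.reached_goal for c in cases) / n,
    }
```

Using the median rather than the mean keeps one stalled case from masking an otherwise fast workflow, though both are worth watching.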

“What I wanted to know was not whether the AI was smart. I wanted to know whether it actually did the work. That's a different question, and most vendors couldn't answer it until we asked to see the workflow run on our own data.”

Best fit and less ideal fit

AI agent platforms fit best for: specialty practices running high-volume coordination workflows (referral-heavy, PA-heavy, outreach-heavy), multi-location groups where coordination failures compound across sites, FQHCs and CHCs juggling quality reporting and care gap outreach at scale, and behavioral health groups where referral follow-through is structurally broken. Leaders at PE-backed multi-location groups are the most natural audience for this framework.

AI agent platforms are less ideal for: single-provider practices where workflow volume doesn't justify agent configuration overhead, organizations on legacy EHRs without API access where deep integration isn't available, practices that need narrow point solutions for a single task (use RPA or a targeted chatbot instead).

Frequently asked questions

Is an AI agent the same thing as a chatbot with better marketing?

No. A chatbot is a conversational interface that answers and routes. An AI agent is autonomous workflow execution that performs actions across multiple systems. The functional difference shows up in whether the system finishes the task or hands it to a human to finish. If the human has to do the next step, it's a chatbot. If the system closes the loop, it's an agent.

Is RPA obsolete in healthcare?

Not obsolete, but narrower in scope than it was five years ago. RPA still works for structured, repetitive tasks on stable systems. For end-to-end workflow coverage, AI agents have displaced RPA because they handle the exceptions and variability that RPA can't. Most 2026 deployments use RPA inside an agent framework, not as a standalone category.

How do I know if a vendor is selling me an actual AI agent or a chatbot in disguise?

Ask three questions. Does the system execute actions in my EHR, payer portal, or scheduling system without human hand-off? What percentage of the workflow does it complete end to end in production deployments? Can I see a live customer deployment, not a demo, running the workflow I care about? Vendors with actual agent capability will answer these concretely. Vendors selling a chatbot will redirect to “how natural it sounds” or “how many integrations are on the roadmap.”

What happens when the AI agent encounters something it can't handle?

A well-designed agent escalates with full context to a human reviewer. The exception goes into a staff queue with a summary of what the agent tried, what it saw, and why it stopped. The human resolves the case, and the resolution becomes training data for the next encounter. The wrong design is an agent that silently fails or a chatbot that pretends the task is done when it isn't.
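
The escalation record is the part worth specifying up front. Here is a minimal Python sketch of a queue item carrying the three pieces described above (what the agent tried, what it saw, why it stopped), plus a `resolve` helper that stores the human's decision alongside it. All names are hypothetical, and "training data" here just means a retained, labeled log; how a given platform learns from it is vendor-specific.

```python
from dataclasses import dataclass, asdict

@dataclass
class Escalation:
    """Hypothetical record an agent places in a staff queue when it stops."""
    case_id: str
    attempted: list       # steps the agent tried, in order
    observed: str         # what the agent saw that it could not resolve
    stop_reason: str      # why it stopped instead of guessing
    resolution: str = ""  # filled in by the human reviewer

    def summary(self) -> str:
        return (f"[{self.case_id}] tried: {', '.join(self.attempted)}; "
                f"saw: {self.observed}; stopped because: {self.stop_reason}")

def resolve(item: Escalation, resolution: str, log: list) -> None:
    """Record the human decision and keep the full case as a labeled example."""
    item.resolution = resolution
    log.append(asdict(item))
```

The reviewer reads `summary()` instead of reconstructing the case from scratch, and the log keeps the agent's context and the human's answer together.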

How long does an AI agent deployment take in healthcare?

For a purpose-built healthcare AI agent platform with a supported EHR, deployment on the first workflow typically runs 4 to 8 weeks. Additional workflows on the same platform add 2 to 4 weeks each once integrations are established. Deployments that are struggling at the 12-week mark usually have an underlying EHR or data access problem, not an agent problem.

Pulling it together

The distinction between RPA, chatbots, and AI agents matters because it shapes the buying decision, the implementation expectation, and the operational outcome. Three technologies, three use cases, and a decision framework that starts with the problem rather than the demo.

If you want to see what an AI agent looks like on a real coordination workflow running on your EHR, we'd be happy to walk you through it.

See an AI agent run your referral or PA workflow end to end.

Book a 15-minute walkthrough. We'll demo the fax-to-scheduled-appointment or PA-to-approval path on your EHR and your payer mix.

Sami scaled Simple Online Healthcare to $150M and built a multi-specialty telehealth clinic across 20 specialties and all 50 states. Connect on LinkedIn.