Voice AI in Healthcare: Where It Works, and Where It Still Breaks

A vendor demo shows a voice AI booking a dermatology appointment in 45 seconds. Six months later, one in three of those implementations has stalled. Here's the honest map of where voice AI works, where it breaks, and how to tell the difference before you buy.


A vendor demo shows a voice AI booking a dermatology appointment in 45 seconds, handling an insurance question, and rescheduling a follow-up. It sounds polished. It sounds ready. Six months later, roughly one in three of those implementations has stalled or rolled back.

The voice AI didn't fail because the technology is bad. The technology is good and getting better every quarter. It stalled because the vendor sold a demo that papered over the use cases voice AI still handles poorly, and the practice discovered those cases the hard way: on live patient calls that didn't fit the happy-path script.

This piece is the honest map. Four use cases where voice AI is already the clear winner over staff. Three where it consistently fails in 2026 and will keep failing for another 12 to 24 months. The integration gap that separates real voice AI from a voice-enabled answering service. And a framework operations leaders can use to evaluate vendors before they buy.

What do the benchmarks say voice AI can and can't do in 2026?

Call resolution rates for well-configured deployments sit between 80 and 85 percent. The remaining 15 to 20 percent of calls, roughly one in every five to seven, still require a human handoff, not because the AI is broken, but because the scope of what can be resolved autonomously has a real ceiling that vendors don't like to advertise.

No-show rates fall by 35 to 40 percent relative to the 18 to 25 percent industry baseline, and the reduction holds across specialty types. Time to first patient contact on inbound referrals drops from 3 to 7 business days to under 5 minutes, which is the single biggest swing factor in referral-to-appointment conversion.
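To make the no-show figure concrete, here is a small worked calculation. It reads the 35 to 40 percent as a relative reduction applied to the baseline no-show rate, which is an interpretation of the figures above, and the function name is purely illustrative.

```python
def no_show_rate_after(baseline_rate: float, relative_reduction: float) -> float:
    """Return the no-show rate after applying a relative reduction.

    Assumption: the reduction is relative to the baseline rate,
    so a 40% reduction of a 20% baseline leaves a 12% no-show rate.
    """
    if not 0.0 <= baseline_rate <= 1.0:
        raise ValueError("baseline_rate must be between 0 and 1")
    if not 0.0 <= relative_reduction <= 1.0:
        raise ValueError("relative_reduction must be between 0 and 1")
    return baseline_rate * (1.0 - relative_reduction)


# Range implied by the figures above: baseline 18-25%, reduction 35-40%.
best_case = no_show_rate_after(0.18, 0.40)   # lowest baseline, largest reduction
worst_case = no_show_rate_after(0.25, 0.35)  # highest baseline, smallest reduction
print(f"Post-deployment no-show rate: {best_case:.1%} to {worst_case:.1%}")
```

Under that reading, a practice would land at a no-show rate of roughly 11 to 16 percent, depending on where it started.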

Gartner forecast that 80 percent of healthcare providers would invest in conversational AI by 2026, and the market is already there. The gap between investment and operational payoff depends on picking the right use cases to start with, and being honest about which ones to leave alone until the technology catches up.

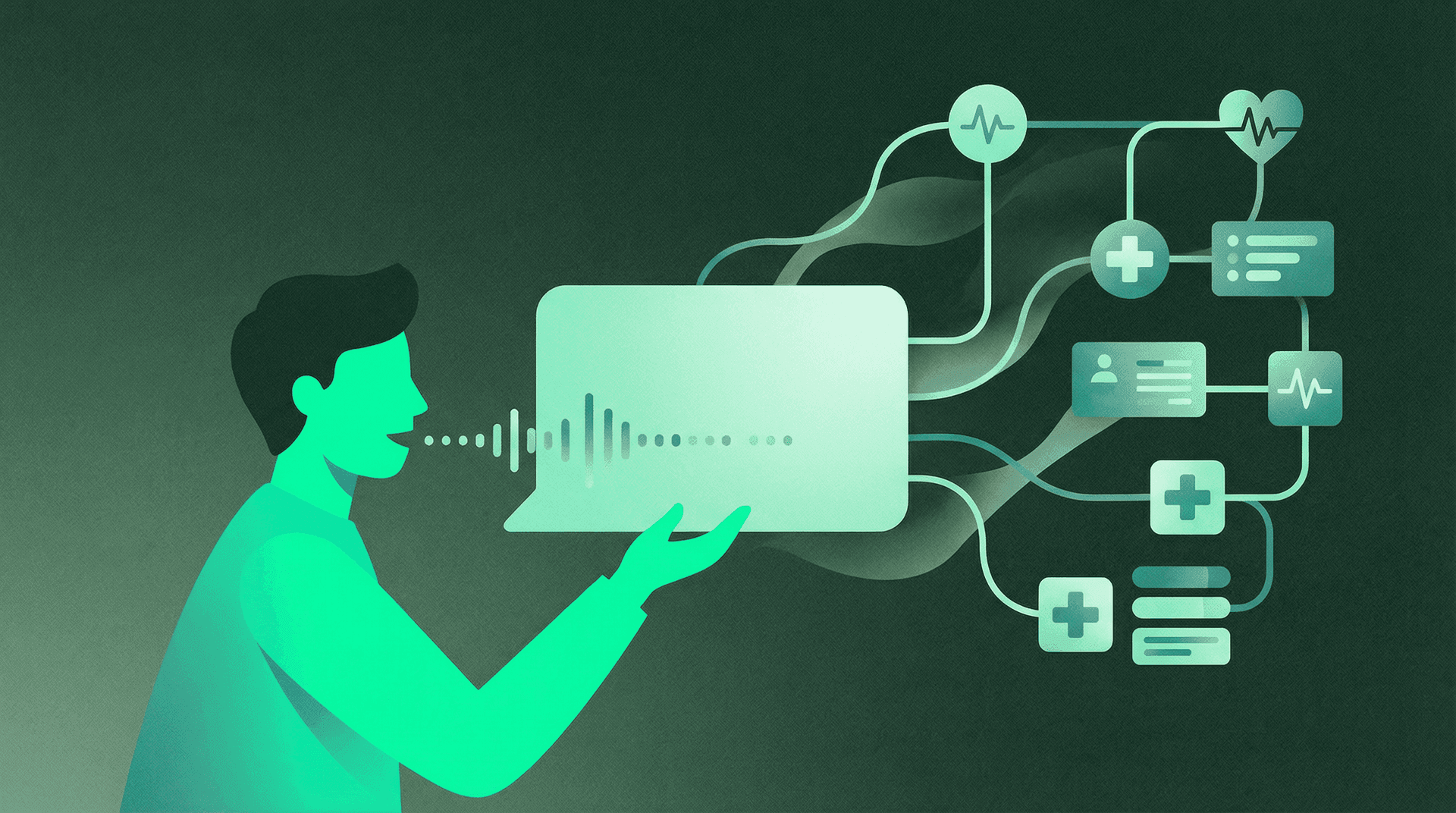

Which four healthcare use cases does voice AI handle better than staff today?

Four use cases where voice AI is already the clear operational winner. In each, the work is high-volume, pattern-based, outside normal staffing hours, or some combination. All four share a structural feature: the patient interaction is narrow in scope, and the information required to resolve it lives in the EHR.

After-hours scheduling for specialty practices

A patient calls at 9 PM on a Tuesday to book a dermatology follow-up. With a traditional answering service, the voicemail gets picked up the next morning, a coordinator calls back by noon, the patient is at work, phone tag begins, and the booking happens Thursday at the earliest. With voice AI that has real-time EHR integration, the patient speaks the request, the AI matches the chart, sees the schedule, books a slot, confirms, and writes back to the PMS. Confirmed appointment before they hang up.

After-hours call volume in specialty practices typically runs 15 to 25 percent of total inbound volume. A practice that converts those calls rather than losing them picks up meaningful schedule utilization without adding headcount.

Appointment confirmation and reminder calls

The highest-volume, lowest-complexity outbound task in most practice operations. 45-second calls. Five possible outcomes: confirmed, cancelled, rescheduled, no answer, wrong number. Small decision tree, low failure cost. Practices running automated voice confirmations on top of SMS typically see confirmation completion rates rise from the 60 to 65 percent range to above 85 percent. The lift comes from patients who don't respond to text but will answer a phone call if the caller ID reads like a local business.
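The decision tree is small enough to write down in full. The sketch below models the five outcomes as an enum and maps each to a follow-up step. The follow-up actions are hypothetical placeholders, not a description of any vendor's behavior.

```python
from enum import Enum


class CallOutcome(Enum):
    """The five outcomes of a confirmation call named in the text."""
    CONFIRMED = "confirmed"
    CANCELLED = "cancelled"
    RESCHEDULED = "rescheduled"
    NO_ANSWER = "no answer"
    WRONG_NUMBER = "wrong number"


# Hypothetical follow-up actions; a real practice would define its own.
NEXT_ACTION = {
    CallOutcome.CONFIRMED: "mark appointment confirmed",
    CallOutcome.CANCELLED: "release slot and offer it to the waitlist",
    CallOutcome.RESCHEDULED: "move appointment to the new slot",
    CallOutcome.NO_ANSWER: "retry later or fall back to SMS",
    CallOutcome.WRONG_NUMBER: "flag contact details for staff review",
}


def next_action(outcome: CallOutcome) -> str:
    """Look up the follow-up step for a finished confirmation call."""
    return NEXT_ACTION[outcome]


for outcome in CallOutcome:
    print(f"{outcome.value:>12} -> {next_action(outcome)}")
```

Because every outcome has exactly one follow-up, a mishandled call costs little and is easy to audit, which is what makes this workflow a safe starting point.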

Recall and reactivation outreach

Every practice has patients overdue for a follow-up, annual exam, care gap closure, or specialty referral they never booked. At most practices, that list doesn't get worked. Not because nobody wants to, but because the math doesn't support a coordinator spending 20 hours a week calling patients who are hard to reach.

Voice AI changes that math. A coordinator calling through a 1,000-patient recall list might reach 300 dials in a week. A voice AI runs through the full list in two days, adapts to patient-preferred contact hours, and persists across SMS and voice. Contact rate rises, and cost per contact drops by an order of magnitude.
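The throughput gap can be worked out from the numbers in the paragraph above. The list size, dial rate, and two-day figure come from the text. The five-day work week is an added assumption, and the comparison covers a single pass through the list only, not cost.

```python
import math

LIST_SIZE = 1_000                  # patients on the recall list (from the text)
COORDINATOR_DIALS_PER_WEEK = 300   # from the text
AI_DAYS_FOR_FULL_LIST = 2          # from the text
WORKDAYS_PER_WEEK = 5              # assumption, not from the text

# How long one coordinator needs for a single pass through the list.
coordinator_weeks = LIST_SIZE / COORDINATOR_DIALS_PER_WEEK
coordinator_workdays = coordinator_weeks * WORKDAYS_PER_WEEK

# Ratio of coordinator workdays to voice AI days for the same pass.
speedup = coordinator_workdays / AI_DAYS_FOR_FULL_LIST

print(f"Coordinator: {coordinator_weeks:.1f} weeks "
      f"(about {math.ceil(coordinator_workdays)} workdays)")
print(f"Voice AI: {AI_DAYS_FOR_FULL_LIST} days, "
      f"about {speedup:.0f}x faster for one pass")
```

On these assumptions a single coordinator needs more than three weeks for one pass, which helps explain why the list usually goes unworked.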

FQHCs and primary care groups running care gap closure programs see the sharpest lift here. HEDIS and quality incentive payments move on the back of screenings that get booked because the outreach happened at all. Our FQHC care gap closure guide covers the full workflow.

Routine eligibility and benefits questions

"Do you take my insurance?" is the most asked question at every practice in the country, and it has a factual answer that lives in the patient record and the payer roster. Voice AI handles this well because the scope is narrow and the source of truth is structured. Coverage determination for specific services is harder and sits in the failure zone below. Basic eligibility routing is a clear win.

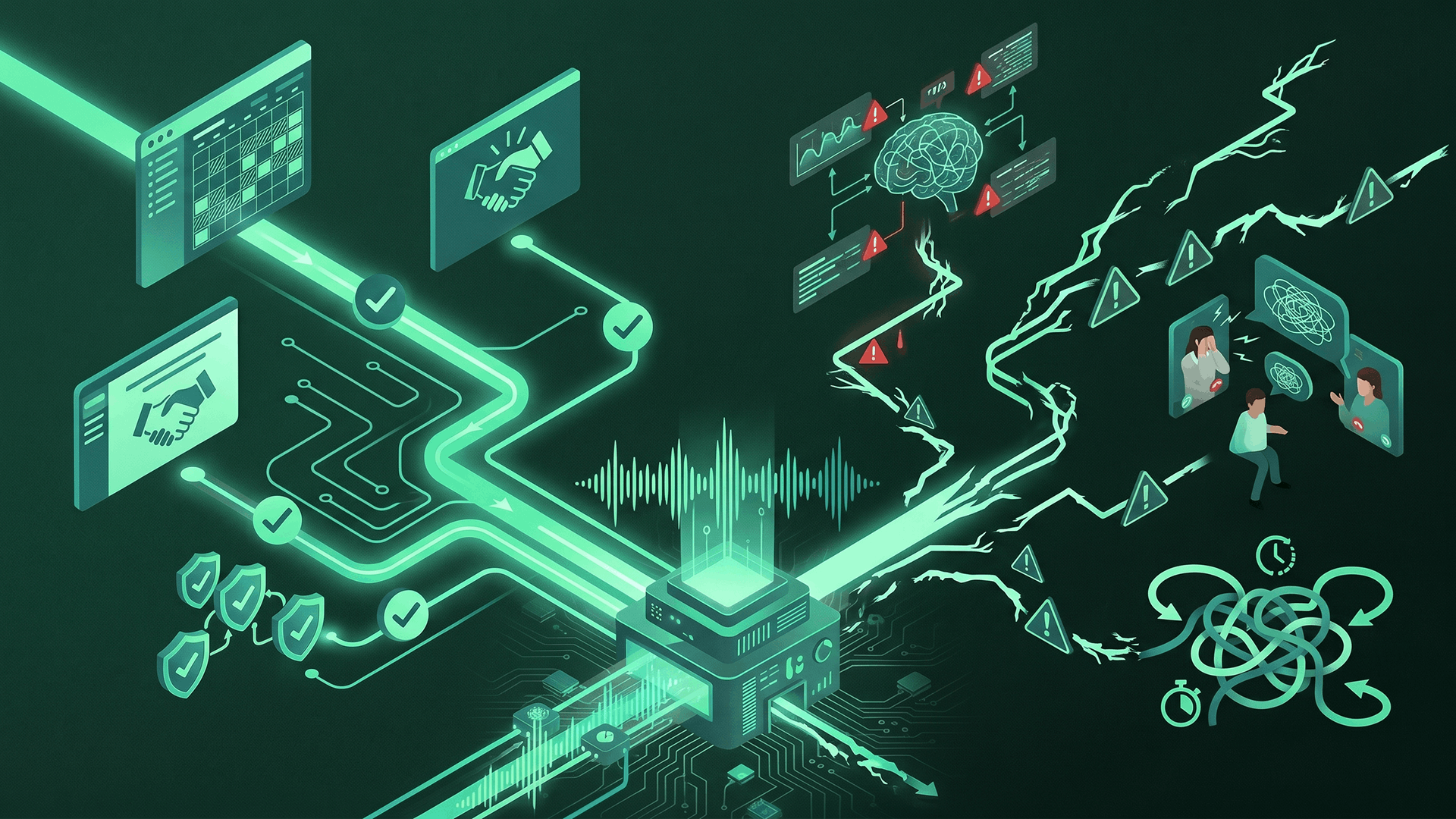

Where does voice AI still break in healthcare?

Three use cases where voice AI fails often enough in 2026 that operations leaders should plan around them, not into them. Each shares a structural feature: the conversation requires judgment that can't be pattern-matched from the EHR or the call script.

Complex clinical triage

A patient calls saying they have chest pain and shortness of breath. Another describes a post-surgical wound that looks "not right." A third asks whether they should take their blood thinner before tomorrow's procedure.

None of these should be handled by voice AI alone in 2026. The clinical judgment isn't in the EHR, the risk of a wrong answer is unacceptable, and the liability exposure is real. Vendors who claim autonomous "symptom triage" are usually describing a routing layer that hands off to a nurse. The handoff is the feature. If a vendor pitches autonomous clinical triage, that's a red flag.

Emotionally loaded conversations

A patient calls to schedule a first psychiatric evaluation. They sound hesitant. Halfway through, they mention they've been thinking about not being around anymore. The voice AI's scheduling script doesn't have a branch for this.

A patient calls to cancel a chemotherapy appointment. They don't say why. The AI confirms the cancellation and offers to reschedule. What the patient needed was a human to ask why they were cancelling.

These conversations happen every day in specialty practices, behavioral health groups, and oncology centers. Voice AI in 2026 is not equipped to handle them well, and deploying it into those moments damages trust more than it saves in staff time. The right pattern is a clean, early handoff: the moment the conversation leaves the happy path, a human takes over.
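One way to picture the handoff pattern is a router where the human is the default and the AI continues only on confident, in-scope turns. This is a minimal sketch: the intent labels, the confidence threshold, and the upstream classifier that produces them are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical set of intents this deployment is scoped to handle.
IN_SCOPE_INTENTS = {"book_appointment", "confirm_appointment", "reschedule_appointment"}

# Hypothetical threshold; it would need tuning against real call data.
MIN_CONFIDENCE = 0.85


@dataclass(frozen=True)
class Turn:
    intent: str        # label from an upstream classifier (assumed to exist)
    confidence: float  # classifier confidence in [0, 1]


def route(turn: Turn) -> str:
    """Return "continue" only for confident, in-scope turns; otherwise hand off.

    Handoff is the default: unknown intents and low-confidence turns
    both go to a human rather than letting the script keep going.
    """
    if turn.intent in IN_SCOPE_INTENTS and turn.confidence >= MIN_CONFIDENCE:
        return "continue"
    return "handoff_to_human"


print(route(Turn("reschedule_appointment", 0.97)))  # confident and in scope
print(route(Turn("reschedule_appointment", 0.60)))  # in scope but uncertain
print(route(Turn("unrecognized", 0.99)))            # outside the scripted scope
```

A per-turn check like this would not catch the chemotherapy cancellation described above, because that call looks like a confident, in-scope request. Deciding which workflows and appointment types to automate at all is a separate scoping decision.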

Multi-topic calls with frequent context switches

A patient calls to reschedule tomorrow's appointment, asks about a prescription refill, checks on a referral that was supposed to be sent last week, and wants to know if their balance was updated after their last insurance payment. Human coordinators handle this, tracking all four threads in parallel.

Voice AI in 2026 does not. It loses the thread. It resolves one item and treats the others as new calls. It transfers to a human who has to unpack what was and wasn't already addressed. Multi-topic handling is a real technical frontier, but practices that deploy voice AI as the primary inbound channel for all calls (rather than for specific, scoped workflows) run into this fast, and staff end up cleaning up AI-initiated handoffs instead of saving time.

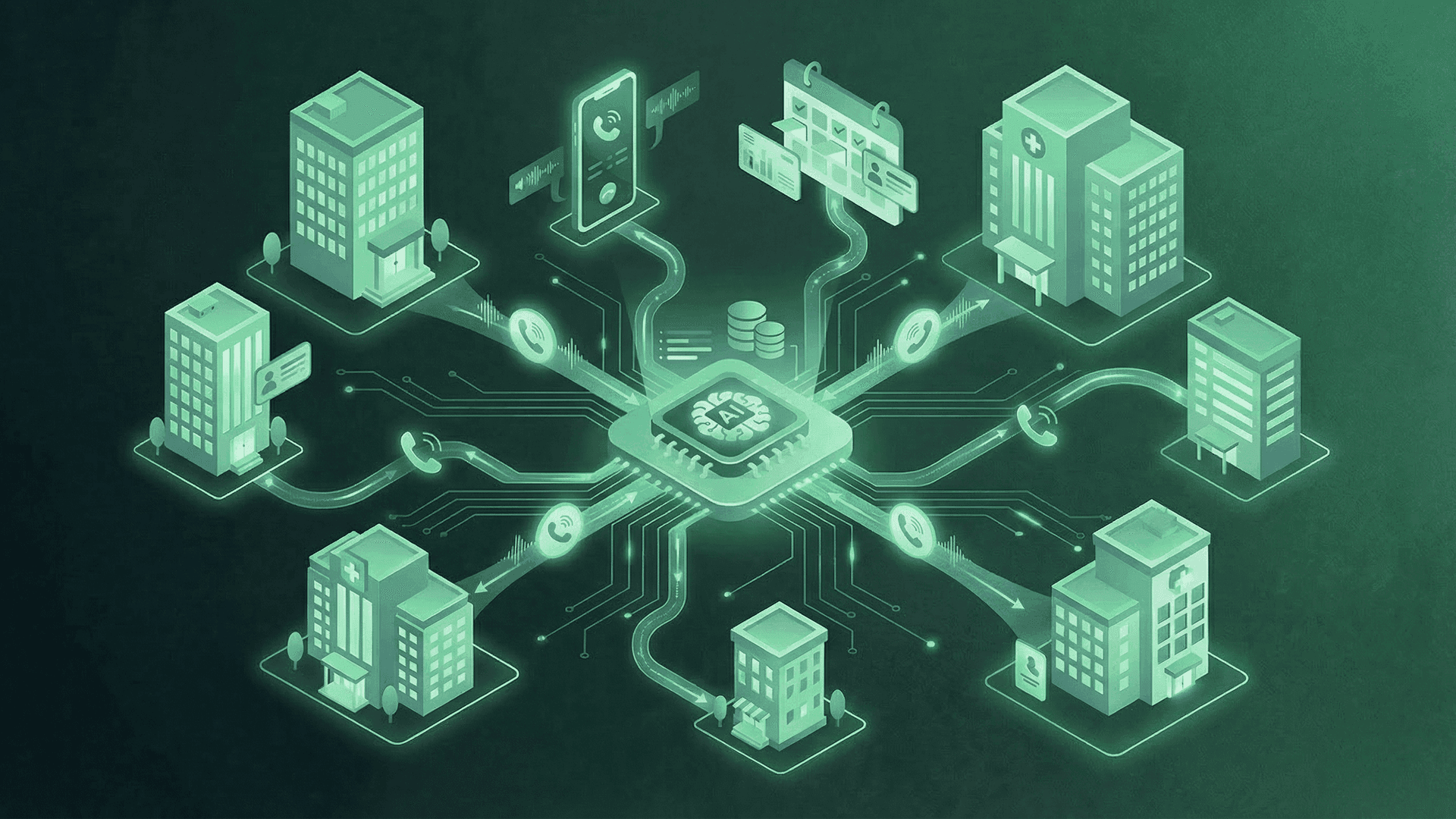

Why does "EHR integration" mean different things to different vendors?

The single biggest hidden variable in a voice AI evaluation is what the vendor means by "EHR integration." Most won't volunteer the distinction until you ask directly.

Three levels exist in the market:

Level 1: Read-only. The voice AI sees patient records and schedule availability but cannot write to the EHR. When a booking happens, a coordinator enters it manually the next morning.

Level 2: Partial write-back. The AI creates appointment requests or draft records that a coordinator reviews and confirms. Faster than Level 1, but still a handoff.

Level 3: Real-time bidirectional. The AI reads and writes directly in real time. A confirmed booking is a confirmed booking.

Only Level 3 changes the economics. Level 1 and Level 2 deployments create what might be called "expensive voicemail": the AI answers the call, but the coordinator workload hasn't dropped.

The one question that separates real scheduling infrastructure from a voice-enabled answering service: "When a patient books on your system at 9 PM, is that appointment confirmed in our PMS by 9:01 PM?" Anything other than an unconditional yes is Level 1 or Level 2.
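The three levels and the 9:01 PM test can be expressed as a short classifier over a vendor's answers. This is a sketch under stated assumptions: the field names are invented for illustration, the 60-second limit is taken from the 9 PM to 9:01 PM framing, and scoring a delayed but unreviewed write as Level 2 is a judgment call.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class IntegrationProfile:
    can_write: bool                     # can the AI write to the EHR/PMS at all?
    needs_coordinator_review: bool      # are writes only drafts a human must confirm?
    writeback_seconds: Optional[float]  # delay until the booking is live; None if unknown


def integration_level(profile: IntegrationProfile, realtime_limit_s: float = 60.0) -> int:
    """Map a vendor's answers onto Level 1, 2, or 3 as described above."""
    if not profile.can_write:
        return 1  # read-only: a coordinator enters the booking manually
    if profile.needs_coordinator_review:
        return 2  # partial write-back: still a human handoff
    if profile.writeback_seconds is None or profile.writeback_seconds > realtime_limit_s:
        return 2  # writes exist but are batched, delayed, or unverified (assumption)
    return 3      # real-time bidirectional


nightly_sync = IntegrationProfile(can_write=True, needs_coordinator_review=False,
                                  writeback_seconds=8 * 3600)
realtime = IntegrationProfile(can_write=True, needs_coordinator_review=False,
                              writeback_seconds=5)
print(integration_level(nightly_sync), integration_level(realtime))
```

An unknown write-back time is scored as Level 2 on purpose, mirroring the rule that anything short of an unconditional yes does not count as Level 3.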

Linear Health's voice AI is built as a Level 3 system with real-time bidirectional integration into athenahealth, Epic, eClinicalWorks, and 20+ other EHR systems. Our voice AI and EHR integration deep dive covers the full technical picture.

“We were losing thousands in revenue to no-shows and delayed scheduling. Linear Health contacted our patients faster than we ever could and our show rates improved dramatically.”

Voice AI vs. staff vs. IVR across ten real healthcare use cases

| Use Case | IVR | Staff | Voice AI (L1-L2) | Voice AI (L3) |
|---|---|---|---|---|
| After-hours scheduling | Voicemail only | Not available | Captures, books next day | Books in real time |
| Appointment confirmation | Low completion | Works | Works | Works |
| Recall and reactivation | Not possible | Capacity-limited | Needs coordinator follow-up | Full autonomous resolution |
| Basic insurance eligibility | Route only | Works | Works | Works |
| Coverage determination | Not possible | Works | Fails | Partial, human handoff |
| Referral intake outbound | Not possible | Days of delay | Under 5 minutes | Under 5 minutes, books on call |
| Complex clinical triage | Route only | Nurse required | Should not be used | Should not be used |
| Emotionally loaded conversations | Not possible | Required | Should not be used | Should not be used |
| Multi-topic inbound calls | Breaks down | Works | Fails | Partial, improving |
| Bilingual scheduling (EN + ES) | Press 2 for Spanish | Requires bilingual staff | Works | Works |

Six use cases where Level 3 voice AI already wins on economics. Two where it should not be deployed at all. Two where even Level 3 is only partial and still depends on a human handoff.


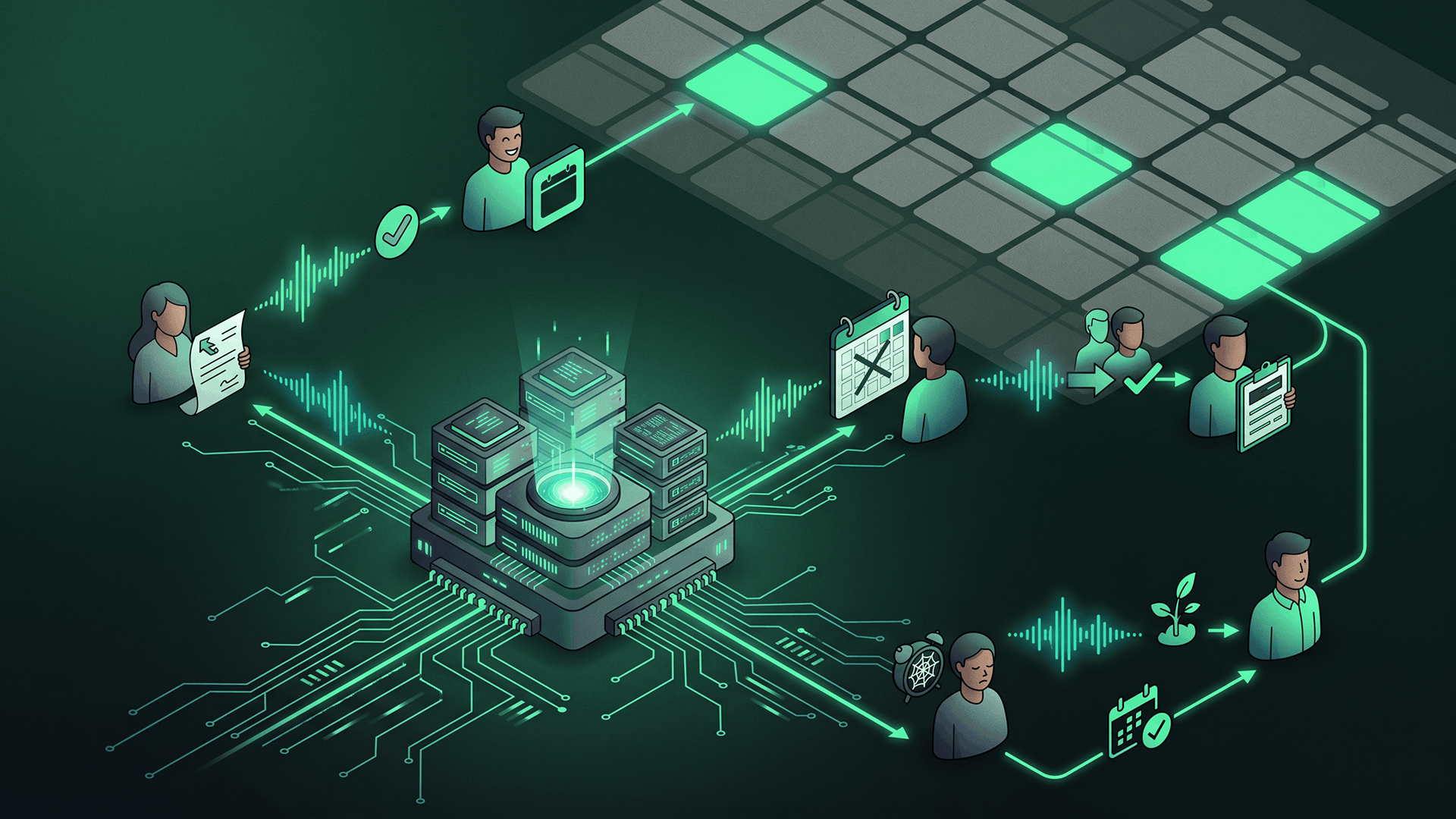

How does the coordinator role change after voice AI goes live?

Every practice asks the same question before deployment: are we replacing staff?

At practices that implement well, the answer is no, but the job changes. Voice AI removes the volume of routine calls that require no judgment: confirmations, voicemails, working a recall list one patient at a time, rescheduling by calling through a waitlist in sequence. What it leaves is the work that needs a human: multi-provider scheduling, clinical escalations, PA denials and appeals, referring provider relationships, and the handoffs the AI correctly identifies as needing human judgment.

Practices that get this right don't cut coordinator headcount. They stop hiring into a role that was 70 percent repetitive work, redeploy the team into higher-value coordination, and stop the burnout-driven turnover cycle that was costing them 30 to 40 percent of their coordinator workforce every year. Our conversational AI implementation guide covers the broader playbook across voice, SMS, and chat.

How should operations leaders evaluate a voice AI vendor in 2026?

Five questions that expose whether a vendor is selling Level 3 voice AI or a demo dressed up as one.

1. Show me a live booking from an outbound call into your customer's PMS, end to end. Not a demo environment. A real one. If they won't or can't show this, the integration isn't what they're claiming.

2. What is your call resolution rate across your customer base, not your best customer? The number you want is 80 to 85 percent. If they quote 95+, they're measuring a narrow definition. If they quote under 70, implementation quality is inconsistent.

3. Which use cases do you recommend starting with, and which do you recommend against? A vendor with real deployment experience will have an opinion. A vendor who says "we do everything" is selling a demo.

4. How do you handle the moment a patient says something your system wasn't trained for? Listen for the word "handoff." The answer you want involves early detection of out-of-scope conversations and clean routing to a human. The answer you don't want involves the AI trying to keep going.

5. What does your integration with our specific EHR write back to the record, and in what time frame? Specifics only. Nightly CSV exports or overnight sync mean Level 1 or Level 2.
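The thresholds in question 2 can be turned into a quick reading of whatever number a vendor quotes. The sketch below uses only the cutoffs named above. The text does not say how to read a figure between 70 and 80 or between 85 and 95, so the fallback message for those ranges is an assumption.

```python
def read_resolution_rate(rate_percent: float) -> str:
    """Interpret a vendor-quoted call resolution rate (in percent)."""
    if not 0 <= rate_percent <= 100:
        raise ValueError("rate_percent must be between 0 and 100")
    if rate_percent >= 95:
        return "likely measuring a narrow definition of 'resolved'"
    if rate_percent < 70:
        return "implementation quality is probably inconsistent"
    if 80 <= rate_percent <= 85:
        return "in the range you want"
    # Not covered by the text: 70-80 and 85-95 (assumed follow-up).
    return "outside the stated ranges; ask how the rate is measured"


for quoted in (97, 83, 75, 62):
    print(f"{quoted}% -> {read_resolution_rate(quoted)}")
```

Whatever the number, it only means something if it is measured across the whole customer base, as the question insists.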

For how voice AI fits into broader scheduling infrastructure, including appointment template and provider roster prerequisites, see our scheduling solution page.

Who is voice AI the best fit for, and who should wait?

Best fit:

- Specialty practices processing 100+ inbound referrals per month, where speed of first patient contact determines conversion rates

- Multi-location groups running recall or reactivation campaigns against lists of 1,000+ overdue patients

- Practices with measurable after-hours call volume and abandonment rates above 10 percent

- FQHCs and primary care groups managing care gap closure outreach at population scale

- Organizations on athenahealth, Epic, eClinicalWorks, or another modern EHR that supports real-time bidirectional integration

Less ideal fit:

- Solo practices with under 30 calls per day, where the ROI case takes too long to materialize

- Practices whose primary problem is internal scheduling configuration rather than call handling. Voice AI that writes to a misconfigured schedule creates more cleanup than it saves.

- Clinical workflows dominated by emotionally charged conversations (end-of-life care, acute psychiatric intake, bereavement follow-up) where voice AI's current failure modes would damage trust

- Practices not ready to commit to the operational changes deployment requires, including workflow redesign and coordinator role restructuring

Voice AI in healthcare FAQ

Is voice AI a replacement for the front desk?

No. Voice AI handles 80 to 85 percent of inbound and outbound calls. The remaining 15 to 20 percent, including complex scheduling, clinical questions, and emotionally loaded calls, still requires human coordinators. Best results come from treating voice AI as a capacity multiplier, not a headcount replacement.

How long does voice AI take to implement?

Two to four weeks for initial deployment on straightforward scheduling use cases, with 90 days as the typical point where full inbound and outbound workflows are measurable. EHR integration complexity is the biggest variable.

Can voice AI handle bilingual patient conversations?

Yes for English and Spanish, which covers most bilingual demand in U.S. healthcare. Well-configured voice AI handles natural switching between the two languages within a single call. Other language support varies widely by vendor.

What happens to HIPAA compliance when a voice AI handles patient calls?

Vendors must sign a Business Associate Agreement (BAA) and meet the same HIPAA standards as any other technology handling PHI. Ask for their current BAA template, their SOC 2 Type II report, and their data handling practices. Missing or vague answers are disqualifying.

How do I know if my EHR is a good fit for voice AI integration?

The practical test is whether your EHR supports real-time API access for scheduling, patient records, and insurance eligibility. athenahealth, Epic, eClinicalWorks, and NextGen all support this well. Older or heavily customized systems may limit integration to Level 1 or Level 2, which changes the ROI case. Ask your vendor to show a live deployment on your specific EHR before signing.

Sami scaled Simple Online Healthcare to $150M and built a multi-specialty telehealth clinic across 20 specialties and all 50 states.